Robotic Soccer Players Becoming More Skillful, Thanks to Google DeepMind's Learning Method

Researchers at Google DeepMind have trained miniature robots with a learning method that could one day be used to train humanoid robots designed to assist humans.

For years, researchers in artificial intelligence (AI) and robotics have been striving to create a general intelligence that enables robots to navigate the physical world with the same skill, agility, and understanding as animals or humans. Recently, this effort has focused on deep reinforcement learning (deep RL).

The progress of this work was showcased in a soccer match played by robots. While four-legged robots have shown remarkable skill at soccer and ball control, their bipedal counterparts are still clumsy. This is primarily because stability and hardware limitations force researchers to concentrate on basic skills.

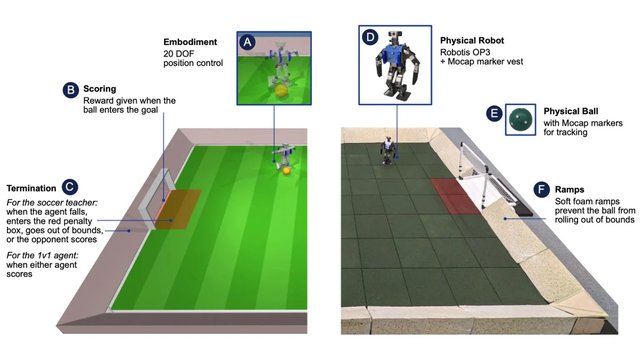

Deep reinforcement learning combines two learning strategies, deep learning and reinforcement learning, to address these challenges. Using this approach, Google DeepMind researchers set out to teach inexpensive miniature robots to play soccer and compete in one-on-one matches. The results of these experiments, conducted in both simulated and physical environments, were published in the scientific journal Science Robotics.

The initial focus was on two skills: standing up from the ground and scoring goals against untrained opponents. Reinforcement learning proved effective: the robots quickly learned to stand up, walk, turn, and kick accurately, and to switch between these actions with ease, as summarized in a report by Interesting Engineering.

Furthermore, a robot was capable of blocking an opponent's shot and predicting the direction of the ball's movement. The experts argue that manually developing these skills would be impractical, as the robot must always respond to circumstances adaptively.

The experts found that the movement strategy developed in simulated environments transferred easily to real robots. In experimental matches, robots trained with this method moved 181 percent faster, turned 302 percent faster, kicked the ball 34 percent faster, and stood up 63 percent faster after falling than robots playing from only a script of basic skills.

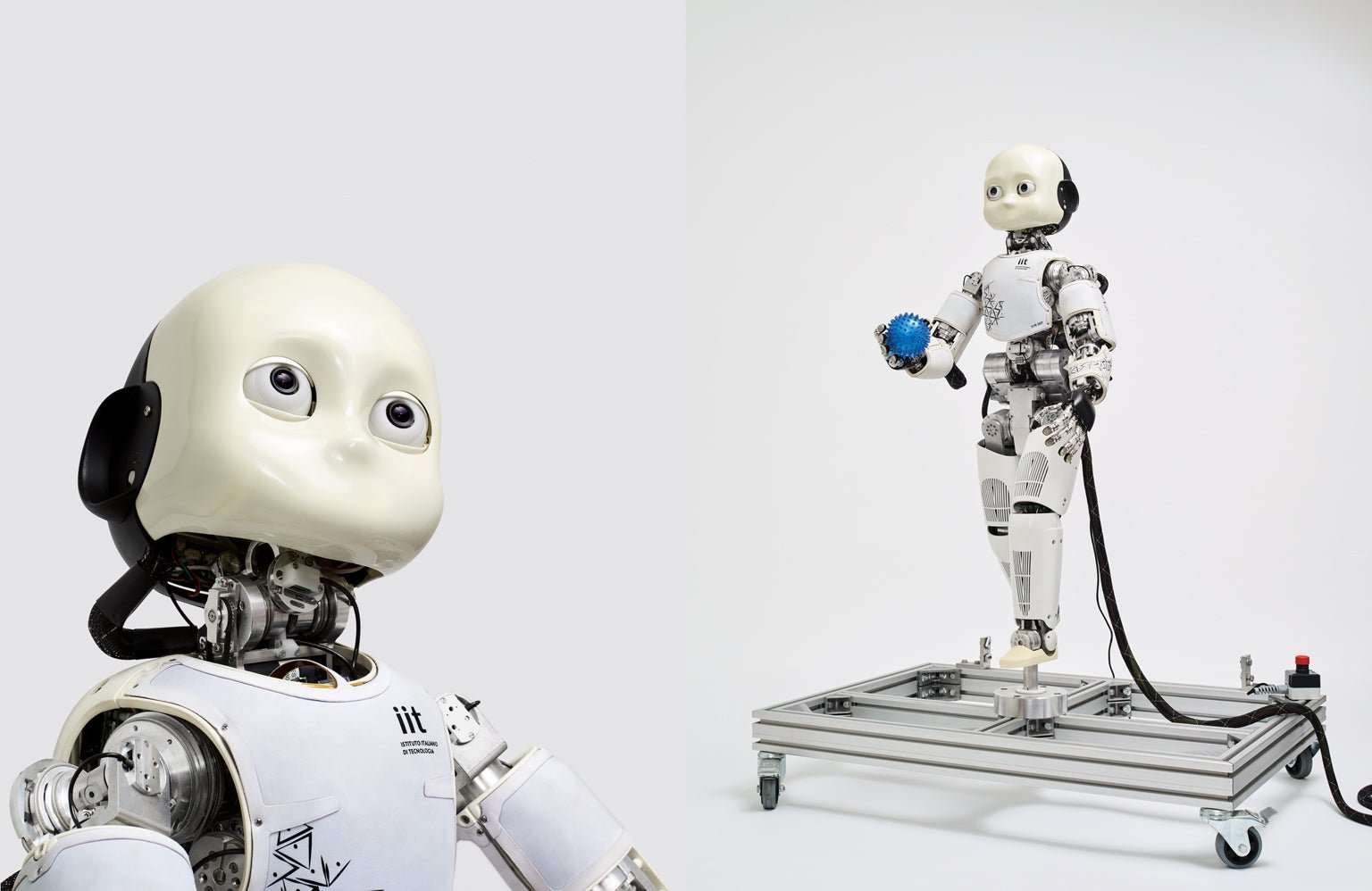

The researchers believe this paves the way for teaching humanoid robots safe movement and sophisticated actions in dynamic environments, heralding a new era of robotic assistance and cooperation with humans.
